What Is LlamaChat?

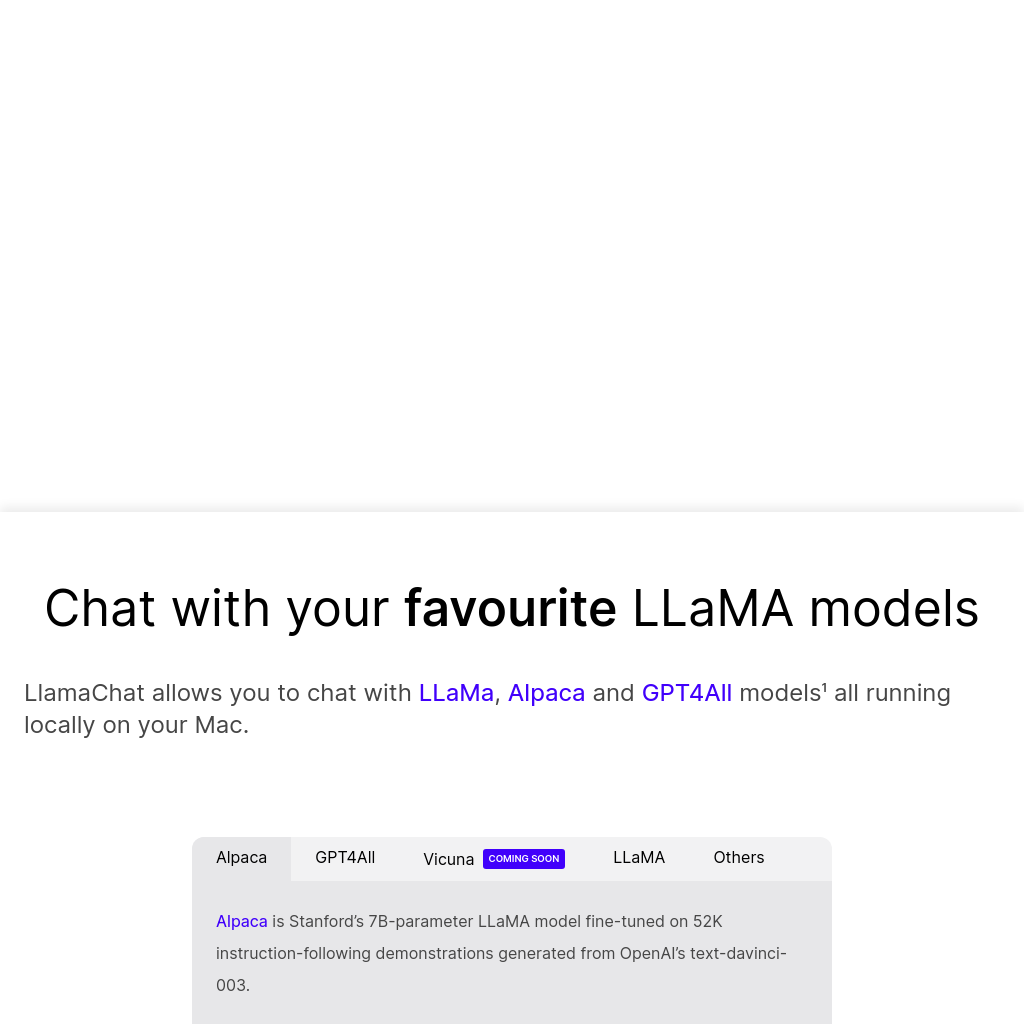

LlamaChat lets users chat with large language models such as LLaMA, Alpaca, and GPT4All directly on their Mac. The models run locally, so conversations stay private and work offline. Supported models include Alpaca, Stanford's instruction-following model fine-tuned from the 7B-parameter LLaMA, and GPT4All, among others. LlamaChat is designed to be easy to use: you can import raw model checkpoints or pre-converted `.ggml` model files.

How to Use LlamaChat

- Download LlamaChat from the project's website or install it via Homebrew.

- Import raw PyTorch model checkpoints or pre-converted `.ggml` model files.

- Select the desired model (e.g., LLaMA, Alpaca, GPT4All) and start chatting.
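Before importing, it can help to check which kind of input you have. The Python sketch below is a hypothetical helper, not part of LlamaChat. It assumes a raw checkpoint follows the layout of Meta's original LLaMA release (a `params.json` file plus one or more `consolidated.NN.pth` shards), and it recognizes pre-converted files by the magic numbers of llama.cpp's legacy ggml containers (`ggml`, `ggmf`, `ggjt`). Which of these variants LlamaChat itself accepts is an assumption left open here.

```python
import json
import struct
from pathlib import Path
from typing import Optional

# Magic numbers of llama.cpp's legacy ggml container variants, stored on disk
# as little-endian uint32 values. Assumption: LlamaChat may accept only some.
GGML_MAGICS = {
    0x67676D6C: "ggml (unversioned)",
    0x67676D66: "ggmf (versioned)",
    0x67676A74: "ggjt (versioned)",
}


def ggml_variant(path: Path) -> Optional[str]:
    """Return the ggml container variant of a file, or None if it is not one."""
    with open(path, "rb") as f:
        header = f.read(4)
    if len(header) < 4:
        return None
    (magic,) = struct.unpack("<I", header)
    return GGML_MAGICS.get(magic)


def classify_import(path: Path) -> str:
    """Guess whether a path is a raw LLaMA checkpoint or a pre-converted ggml file.

    Hypothetical helper: assumes the directory layout of Meta's original LLaMA
    release (params.json plus consolidated.NN.pth shards).
    """
    if path.is_dir():
        params_file = path / "params.json"
        shards = sorted(path.glob("consolidated.*.pth"))
        if params_file.is_file() and shards:
            params = json.loads(params_file.read_text())
            return f"pytorch checkpoint: {len(shards)} shard(s), {params.get('n_layers')} layers"
        return "unknown directory"
    if path.is_file():
        variant = ggml_variant(path)
        if variant is not None:
            return f"pre-converted {variant}"
    return "unknown"


if __name__ == "__main__":
    import sys

    # Example: python classify_import.py path/to/7B path/to/model.bin
    for arg in sys.argv[1:]:
        print(arg, "->", classify_import(Path(arg)))
```

Checking the first four bytes is cheap and avoids loading a multi-gigabyte file just to discover it is in the wrong format.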

Features of LlamaChat

- Local Model Execution: Run LLaMA, Alpaca, and GPT4All models locally on your Mac, ensuring privacy and offline functionality.

- Model Conversion: Import raw PyTorch checkpoints and convert them in the app, or load pre-converted `.ggml` model files.

- Open Source: Powered by open-source libraries like llama.cpp and llama.swift, LlamaChat is free and fully open source.

- Intel and Apple Silicon Support: Built for both Intel and Apple Silicon Macs; requires macOS 13 or later.
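The stated system requirements can be expressed as a small pre-flight check. This Python sketch is illustrative only: `parse_version` and `meets_requirements` are hypothetical names, and it assumes `platform.mac_ver()` reports the macOS release and CPU architecture (`arm64` for Apple Silicon, `x86_64` for Intel), which is how CPython behaves on macOS.

```python
import platform
from typing import Optional, Tuple

# Hypothetical pre-flight check mirroring the stated requirements:
# macOS 13 or later, on Apple Silicon (arm64) or Intel (x86_64).
SUPPORTED_MACHINES = {"arm64", "x86_64"}
MIN_MACOS = (13, 0)


def parse_version(version: str) -> Optional[Tuple[int, int]]:
    """Turn '13.4.1' into (13, 4); return None for an empty or malformed string."""
    parts = version.split(".")
    try:
        major = int(parts[0])
        minor = int(parts[1]) if len(parts) > 1 else 0
    except ValueError:
        return None
    return (major, minor)


def meets_requirements(mac_version: str, machine: str) -> bool:
    """True if the macOS version and CPU architecture satisfy the requirements."""
    parsed = parse_version(mac_version)
    return parsed is not None and parsed >= MIN_MACOS and machine in SUPPORTED_MACHINES


if __name__ == "__main__":
    # On non-macOS systems platform.mac_ver() returns empty strings,
    # so the check reports "not supported".
    release, _, machine = platform.mac_ver()
    print("supported" if meets_requirements(release, machine) else "not supported")
```

Comparing `(major, minor)` tuples avoids the classic string-comparison bug where `"9.0" > "13.0"`.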